Qwen 2.5 Coder 32B Instruct is a powerhouse for coding workloads: its large context window lets it process long code, documentation, or discussion histories, and it can generate up to 8,000 output tokens.

It’s tuned specifically for coding, matching or exceeding GPT‑4o on benchmarks for code generation, debugging, and logic.

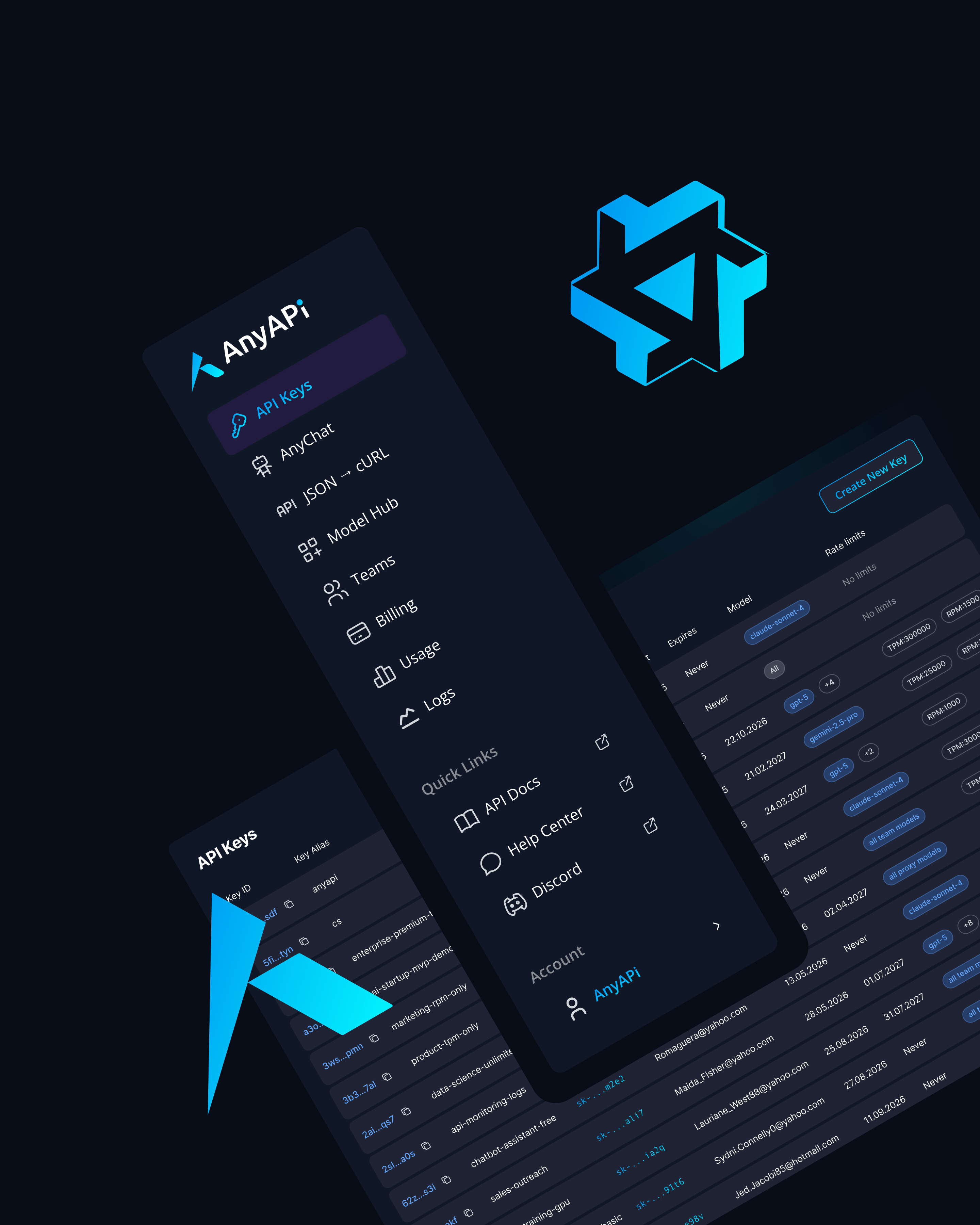
