Multimodal Vision-Language Model with Advanced Reasoning via API

Qwen2.5 VL 72B Instruct is a multimodal vision-language model developed by Alibaba Cloud's Qwen team. Released in January 2025 as part of the Qwen2.5 model family, this flagship instruction-tuned model is the largest in the Qwen2.5-VL series. It combines visual understanding with text generation, which suits it to production environments that require both image analysis and natural language processing.

Qwen2.5 VL 72B Instruct is positioned for developers and enterprises building complex AI systems that demand high-quality multimodal reasoning. It can back real-time applications, generative AI workflows, and production-grade deployments in industries ranging from e-commerce to healthcare and autonomous systems. For teams integrating large language models into their infrastructure, the model offers a balance of performance, versatility, and instruction-following precision.

Key Features of Qwen2.5 VL 72B Instruct

Advanced Multimodal Understanding

Qwen2.5 VL 72B Instruct excels at processing both visual and textual inputs simultaneously, enabling it to analyze images, charts, diagrams, and documents while generating contextually relevant text responses. This capability makes it suitable for applications requiring visual question answering, image captioning, and document intelligence.
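As a rough illustration of what a combined visual and textual input can look like, the sketch below builds a single user message that pairs an image with a question. It assumes the OpenAI-style chat message layout that many vision-language endpoints accept; whether a particular provider uses exactly this shape is an assumption, and the function name and example URL are hypothetical.

```python
import json


def build_vqa_messages(question: str, image_url: str) -> list[dict]:
    """Build a chat message list with one image part and one text part.

    The layout follows the OpenAI-style multimodal message format
    (an assumption, not a confirmed schema for any specific provider).
    """
    return [
        {
            "role": "user",
            "content": [
                {"type": "image_url", "image_url": {"url": image_url}},
                {"type": "text", "text": question},
            ],
        }
    ]


# Hypothetical inputs, used only for illustration.
messages = build_vqa_messages(
    "What trend does this chart show?",
    "https://example.com/chart.png",
)
print(json.dumps(messages, indent=2))
```

Keeping the image and the question in the same message is what lets the model ground its answer in the picture rather than in the text alone.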

Instruction-Following and Alignment

The model has been fine-tuned specifically for instruction-following tasks, ensuring high adherence to user prompts and system-level directives. Safety alignment measures have been integrated to reduce harmful outputs and improve reliability in customer-facing applications.

Multilingual Language Support

Qwen2.5 VL 72B Instruct supports dozens of languages with strong performance in English, Chinese, and other major languages. This multilingual capability allows developers to build global applications without requiring separate models for different regions.

High-Quality Reasoning and Code Generation

Beyond visual tasks, the model demonstrates strong reasoning abilities across mathematical problem-solving, logical inference, and multi-step analysis. It also performs well on code generation tasks, supporting multiple programming languages and understanding complex codebases.

Low-Latency Performance for Real-Time Apps

Despite its 72-billion-parameter scale, Qwen2.5 VL 72B Instruct can serve real-time applications when deployed on appropriate infrastructure. Actual latency depends on the serving hardware, the resolution and number of input images, and the length of the generated output, so teams should benchmark against their own workloads when comparing it with similar-scale models.

Use Cases for Qwen2.5 VL 72B Instruct

Intelligent Document Processing and Analysis

Organizations can integrate the Qwen2.5 VL 72B Instruct API to extract structured information from invoices, receipts, forms, and contracts. The model's ability to understand document layouts, tables, and visual elements enables automated data entry, compliance checking, and document classification across the legal tech, finance, and healthcare sectors.
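One common pattern for this kind of extraction is to ask the model for JSON and validate the reply before it reaches downstream systems. The sketch below shows only the validation side and makes no network call. The field names, the prompt wording, and the sample reply are all hypothetical and are not taken from any real API response.

```python
import json
from dataclasses import dataclass

# Hypothetical field set; a real pipeline would define its own schema.
REQUIRED_FIELDS = ("invoice_number", "total", "currency")

EXTRACTION_PROMPT = (
    "Extract the following fields from the invoice image and reply with "
    "JSON only: " + ", ".join(REQUIRED_FIELDS)
)

FENCE = "`" * 3  # Markdown code-fence marker that models often wrap JSON in


@dataclass
class Invoice:
    invoice_number: str
    total: float
    currency: str


def parse_invoice_reply(reply_text: str) -> Invoice:
    """Turn a model reply into an Invoice, rejecting incomplete answers."""
    text = reply_text.strip()
    if text.startswith(FENCE):
        # Drop the opening fence line and, if present, the closing one.
        lines = text.splitlines()
        if lines[-1].strip() == FENCE:
            lines = lines[:-1]
        text = "\n".join(lines[1:])
    data = json.loads(text)
    missing = [name for name in REQUIRED_FIELDS if name not in data]
    if missing:
        raise ValueError(f"missing fields: {missing}")
    return Invoice(
        invoice_number=str(data["invoice_number"]),
        total=float(data["total"]),
        currency=str(data["currency"]),
    )


# Made-up reply text standing in for a model response.
sample_reply = (
    FENCE + "json\n"
    '{"invoice_number": "INV-1", "total": "12.50", "currency": "EUR"}\n'
    + FENCE
)
invoice = parse_invoice_reply(sample_reply)
print(invoice)
```

Failing loudly on missing fields keeps a partially read document from being written into a ledger or compliance record as if it were complete.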

Visual Question Answering for E-Commerce and Retail

E-commerce platforms can deploy the model to answer customer questions about product images, provide styling recommendations based on visual inputs, and generate detailed product descriptions from photographs. This reduces manual content creation workload and improves customer engagement through interactive visual experiences.

AI-Powered Educational Tools and Tutoring

Educational technology companies can use Qwen2.5 VL 72B Instruct for applications that explain diagrams, solve math problems from images, and provide step-by-step reasoning for visual learning materials. The model's multilingual support enables global reach for adaptive learning platforms.

Code Generation and Technical Documentation

Development teams can integrate the model into IDEs and documentation tools to generate code from flowcharts, explain architectural diagrams, and create technical documentation from screenshots or wireframes. Its strong coding capabilities support multiple programming languages and frameworks.

Customer Support Automation with Visual Context

Support systems can leverage Qwen2.5 VL 72B Instruct to handle tickets that include screenshots, error messages, or product photos. The model can diagnose issues from visual inputs, provide troubleshooting steps, and escalate complex cases with contextual understanding, reducing response times and improving resolution rates.

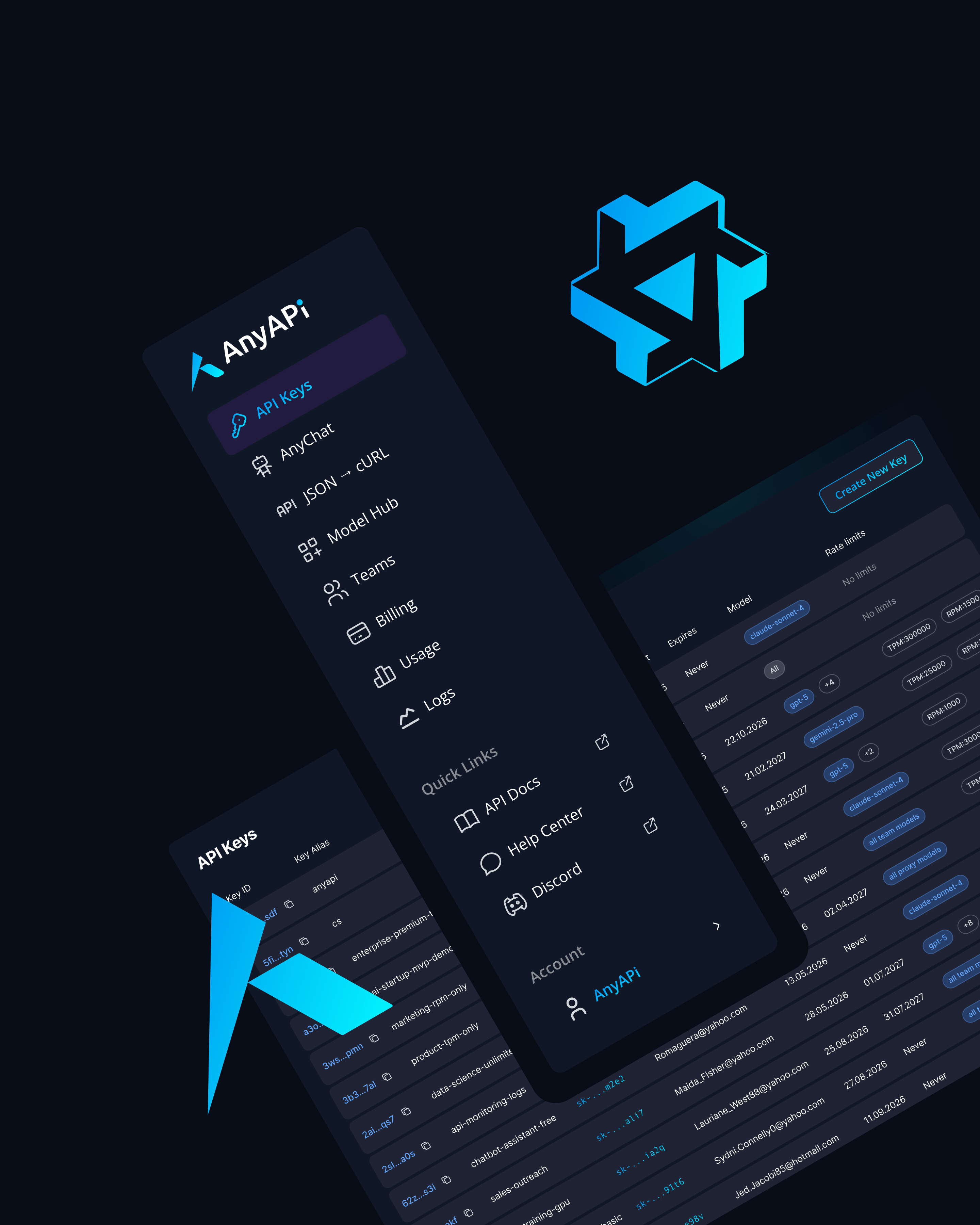

Why Use Qwen2.5 VL 72B Instruct via AnyAPI.ai

AnyAPI.ai provides streamlined access to Qwen2.5 VL 72B Instruct alongside dozens of other leading large language models through a single unified API. Developers avoid the complexity of managing multiple vendor relationships, authentication systems, and billing processes. With one API key, teams can integrate Qwen2.5 VL 72B Instruct and switch between models without rewriting application logic.
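The idea of switching models without rewriting application logic can be sketched as a request builder in which the model is a single parameter. This assumes an OpenAI-style chat request body; the model identifiers below are hypothetical placeholders, not confirmed names from any provider's catalog.

```python
def build_chat_request(model: str, prompt: str, max_tokens: int = 256) -> dict:
    """Return a chat request body in which only `model` varies per model."""
    return {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": max_tokens,
    }


# Hypothetical model identifiers, used only for illustration.
PRIMARY_MODEL = "qwen2.5-vl-72b-instruct"
ALTERNATE_MODEL = "another-vision-model"

prompt = "Summarize the attached report in two sentences."
primary = build_chat_request(PRIMARY_MODEL, prompt)
alternate = build_chat_request(ALTERNATE_MODEL, prompt)

# Collect the keys whose values differ between the two request bodies.
differing = {key for key in primary if primary[key] != alternate[key]}
print(differing)  # {'model'}
```

Because the rest of the request is unchanged, comparing two models on the same prompts becomes a configuration change rather than a code change.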

The platform offers one-click onboarding with no vendor lock-in, allowing organizations to experiment with different models and scale based on actual usage. Usage-based billing ensures cost efficiency, charging only for consumed tokens rather than requiring upfront commitments or minimum spends.

AnyAPI.ai delivers production-grade infrastructure with high availability, automatic failover, and monitoring tools that provide visibility into API performance and usage patterns. Compared with alternatives such as OpenRouter and AIMLAPI, AnyAPI.ai emphasizes provisioning, dedicated support for enterprise customers, unified access across model families, and analytics dashboards that help teams optimize their LLM integration strategy.

For developers building multimodal applications, AnyAPI.ai simplifies the technical challenges of working with vision-language models like Qwen2.5 VL 72B Instruct by handling image encoding, request formatting, and error handling at the infrastructure level.
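For context on what image encoding involves, a local image is often inlined into a request as a base64 data URL. The sketch below shows that step using only the Python standard library. The helper name is hypothetical, and whether a given endpoint expects a data URL, a plain URL, or a file upload is an assumption to check against its documentation.

```python
import base64
import mimetypes


def image_bytes_to_data_url(data: bytes, filename: str) -> str:
    """Encode raw image bytes as a base64 data URL.

    The MIME type is guessed from the filename extension, so the
    extension must match the actual image format.
    """
    mime, _ = mimetypes.guess_type(filename)
    if mime is None or not mime.startswith("image/"):
        raise ValueError(f"not a recognized image file name: {filename!r}")
    encoded = base64.b64encode(data).decode("ascii")
    return f"data:{mime};base64,{encoded}"


# A few placeholder bytes stand in for real image content.
fake_png_bytes = b"\x89PNG\r\n\x1a\n"
data_url = image_bytes_to_data_url(fake_png_bytes, "screenshot.png")
print(data_url[:22])
```

Base64 inflates the payload by roughly a third, so large images are usually resized before encoding to keep request size and token usage in check.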

Start Using Qwen2.5 VL 72B Instruct via API Today

Qwen2.5 VL 72B Instruct represents a powerful option for developers and enterprises building sophisticated multimodal AI applications. Its combination of visual understanding, strong reasoning, multilingual support, and instruction-following precision makes it valuable for startups scaling AI products and ML teams deploying production systems.

By accessing Qwen2.5 VL 72B Instruct through AnyAPI.ai, you eliminate infrastructure complexity and gain flexibility to experiment with multiple models through one unified interface. Whether you are building document intelligence systems, visual chatbots, or AI-powered development tools, this model delivers the capabilities needed for modern generative AI applications.

Integrate Qwen2.5 VL 72B Instruct via AnyAPI.ai and start building today. Sign up, get your API key, and launch multimodal AI features in minutes without vendor lock-in or complex setup processes.