Enterprise-Grade Mixture-of-Experts LLM API for Scalable AI Development

Mixtral 8x22B Instruct is a large language model developed by Mistral AI and one of the most capable open-weight models available for production deployment. Released in April 2024, it uses a Sparse Mixture-of-Experts architecture that activates only about 39 billion of its 141 billion total parameters per token, which keeps inference cost well below that of a dense model of the same size while maintaining flagship-level performance.

As part of Mistral AI's growing model family, Mixtral 8x22B Instruct sits at the top tier, offering capabilities that rival proprietary models while maintaining the flexibility and transparency of an open-weight approach. The model is specifically instruction-tuned for following complex directives, making it particularly relevant for developers building production-grade AI applications, real-time conversational systems, and generative AI tools that require both intelligence and cost efficiency.

For startups scaling AI-based products and ML infrastructure teams, Mixtral 8x22B Instruct delivers a compelling balance of performance, cost, and deployment flexibility that makes it suitable for everything from customer-facing chatbots to internal automation workflows.

Key Features of Mixtral 8x22B Instruct

Sparse Mixture-of-Experts Architecture

Each Mixture-of-Experts layer contains eight expert networks, and a router activates only two of them per token, so about 39 billion of the model's 141 billion parameters (roughly 28%) are used for any given token. This design cuts per-token compute while preserving quality, making it a good fit for teams managing large-scale deployments under budget constraints.
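The routing idea described above can be sketched in a few lines of Python. This is a toy illustration, not Mistral AI's implementation: the experts here are simple scaling functions, the router scores are made up, and the names `top_k_route` and `moe_forward` are hypothetical.

```python
import math

NUM_EXPERTS = 8   # experts per Mixture-of-Experts layer, as stated above
TOP_K = 2         # experts activated per token, as stated above

def top_k_route(router_logits, k=TOP_K):
    """Return [(expert_index, weight), ...] for the k highest-scoring experts.

    Weights are a softmax over the selected logits only, so they sum to 1.
    """
    ranked = sorted(range(len(router_logits)),
                    key=lambda i: router_logits[i], reverse=True)
    chosen = ranked[:k]
    peak = max(router_logits[i] for i in chosen)  # subtract for numerical stability
    exps = [math.exp(router_logits[i] - peak) for i in chosen]
    total = sum(exps)
    return [(i, e / total) for i, e in zip(chosen, exps)]

def moe_forward(token, experts, router_logits):
    """Run only the selected experts and blend their outputs by router weight."""
    return sum(w * experts[i](token) for i, w in top_k_route(router_logits))

# Toy experts: expert i multiplies its scalar input by i + 1 (illustrative only).
experts = [lambda x, s=s: x * s for s in range(1, NUM_EXPERTS + 1)]
logits = [0.1, 2.0, -1.0, 0.5, 2.0, 0.0, -0.3, 1.2]  # made-up router scores

routes = top_k_route(logits)
output = moe_forward(1.0, experts, logits)
active_fraction = 39 / 141  # active vs. total parameters, in billions
```

The active share (39 of 141 billion) is larger than two eighths because parameters outside the expert networks, such as the attention layers, are used for every token.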

Superior Reasoning and Instruction Following

Mixtral 8x22B Instruct demonstrates exceptional performance on reasoning benchmarks, including mathematics, code generation, and multi-step problem solving. Its instruction-tuning enables it to follow complex, nuanced prompts with high fidelity, reducing the need for extensive prompt engineering.

Multilingual Capability

The model supports English, French, German, Spanish, and Italian, with strong performance in each. This makes it suitable for international products and services targeting diverse user bases.

Advanced Coding Proficiency

With specialized training on code-related tasks, Mixtral 8x22B Instruct excels at generating, debugging, and explaining code across multiple programming languages including Python, JavaScript, Java, C++, and more. It supports both code completion and full-function generation workflows.

Function Calling and Tool Use

The model includes native function calling capabilities, allowing it to interact with external APIs, databases, and tools. This feature is critical for building agentic systems and workflow automation platforms that require LLMs to take actions beyond text generation.
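A minimal sketch of the application side of function calling follows. It assumes the JSON Schema style of tool description that many chat APIs accept and a tool call delivered as a name plus a JSON string of arguments; the exact request and response format depends on the provider. The tool `get_order_status` and the helper `dispatch_tool_call` are hypothetical names used for illustration.

```python
import json

# Hypothetical tool description in the JSON Schema style many chat APIs accept.
ORDER_STATUS_TOOL = {
    "type": "function",
    "function": {
        "name": "get_order_status",
        "description": "Look up the shipping status of an order.",
        "parameters": {
            "type": "object",
            "properties": {"order_id": {"type": "string"}},
            "required": ["order_id"],
        },
    },
}

def get_order_status(order_id):
    """Stand-in for a real database or API lookup."""
    return {"order_id": order_id, "status": "shipped"}

# Maps tool names the model may request to local Python callables.
REGISTRY = {"get_order_status": get_order_status}

def dispatch_tool_call(tool_call):
    """Run the function the model asked for and return the result as JSON text."""
    name = tool_call["name"]
    if name not in REGISTRY:
        return json.dumps({"error": f"unknown tool: {name}"})
    try:
        args = json.loads(tool_call["arguments"])
    except json.JSONDecodeError:
        return json.dumps({"error": "arguments were not valid JSON"})
    return json.dumps(REGISTRY[name](**args))

# A tool call shaped the way a model response might carry it (assumed format).
example_call = {"name": "get_order_status", "arguments": '{"order_id": "A-1001"}'}
result = dispatch_tool_call(example_call)
```

The model never executes code itself: it only names a tool and supplies arguments, and the application decides whether and how to run it. Keeping an explicit registry limits the model to functions the application has chosen to expose.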

Production-Ready Latency

Despite the model's size, sparse activation enables competitive inference speeds suitable for real-time applications. Developers can expect response times that support interactive user experiences without the lag associated with dense models of comparable capability.

Use Cases for Mixtral 8x22B Instruct

Intelligent Customer Support Chatbots

Deploy the Mixtral 8x22B Instruct API to power sophisticated customer service platforms that understand context, handle multi-turn conversations, and provide accurate answers across technical and non-technical domains. The 65,536-token context window lets the model reference long conversation histories and support documentation in the same request.

Advanced Code Generation and Review

Integrate Mixtral 8x22B Instruct into development environments, CI/CD pipelines, and code review tools to generate production-quality code, identify bugs, suggest optimizations, and explain complex codebases. The model's proficiency across multiple programming languages makes it valuable for polyglot development teams.

Document Processing and Summarization

Leverage the 65,536-token context window to process lengthy contracts, research papers, technical documentation, and business reports. Mixtral 8x22B Instruct can extract key insights, generate executive summaries, answer questions about document content, and compare multiple documents simultaneously.
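Documents longer than the context window need to be split before they are sent. The sketch below shows one simple way to do that on paragraph boundaries. It assumes a rough four-characters-per-token estimate rather than the model's real tokenizer, and the names `estimate_tokens` and `split_to_fit` and the `reserved_tokens` default are illustrative choices, not part of any API.

```python
CONTEXT_WINDOW = 65_536   # tokens, as stated above
CHARS_PER_TOKEN = 4       # rough heuristic, NOT the model's actual tokenizer

def estimate_tokens(text):
    """Approximate token count using ceiling division on character length."""
    return -(-len(text) // CHARS_PER_TOKEN)

def split_to_fit(document, reserved_tokens=4_096):
    """Split on blank lines so each chunk fits the budget left after reserving
    room for the prompt and the model's answer.

    Limitation: a single paragraph larger than the budget is kept whole.
    """
    budget = CONTEXT_WINDOW - reserved_tokens
    chunks, current, used = [], [], 0
    for paragraph in document.split("\n\n"):
        cost = estimate_tokens(paragraph) + 1  # +1 for the paragraph separator
        if current and used + cost > budget:
            chunks.append("\n\n".join(current))
            current, used = [], 0
        current.append(paragraph)
        used += cost
    if current:
        chunks.append("\n\n".join(current))
    return chunks
```

For production use, counting tokens with the model's own tokenizer gives a tighter and safer budget than a character heuristic.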

Workflow Automation and Data Extraction

Use function calling capabilities to build intelligent automation systems that can read emails, extract structured data from unstructured text, update CRM systems, generate reports, and orchestrate multi-step workflows based on natural language instructions.
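When the model's output feeds a CRM or report, it helps to validate the extracted data before acting on it. The following sketch assumes the model was asked to reply with a single JSON object; the field names in `REQUIRED_FIELDS` and the helper `parse_extraction` are hypothetical.

```python
import json

# Hypothetical schema: field name -> accepted Python type(s).
REQUIRED_FIELDS = {
    "customer_name": str,
    "email": str,
    "order_total": (int, float),
}

def parse_extraction(model_output):
    """Return (record, errors). record is None when the output is unusable."""
    try:
        data = json.loads(model_output)
    except json.JSONDecodeError as exc:
        return None, [f"invalid JSON: {exc.msg}"]
    if not isinstance(data, dict):
        return None, ["expected a JSON object"]
    errors = []
    for field, expected in REQUIRED_FIELDS.items():
        if field not in data:
            errors.append(f"missing field: {field}")
        # bool is a subclass of int in Python, so reject it explicitly.
        elif isinstance(data[field], bool) or not isinstance(data[field], expected):
            errors.append(f"wrong type for field: {field}")
    return (data if not errors else None), errors
```

Returning the error list alongside the record makes it straightforward to retry the request with the errors appended to the prompt, or to route the item to manual review.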

Enterprise Knowledge Base and Search

Deploy Mixtral 8x22B Instruct as an intelligent layer over internal documentation, wikis, and knowledge repositories. The model can synthesize information from multiple sources, provide contextualized answers to employee questions, and assist with onboarding and training programs.

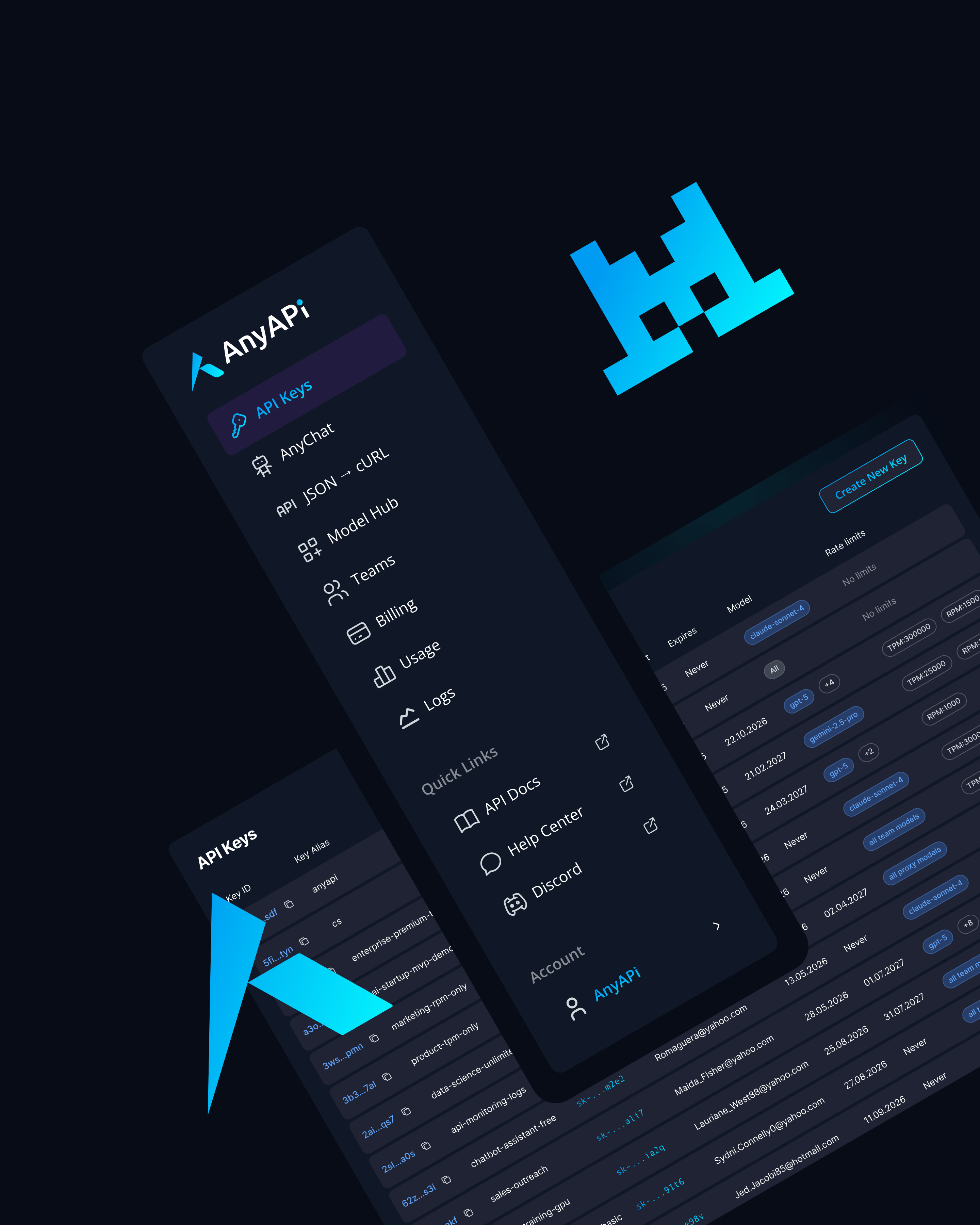

Why Use Mixtral 8x22B Instruct via AnyAPI.ai

AnyAPI.ai provides streamlined access to Mixtral 8x22B Instruct alongside dozens of other leading language models through a single, unified API interface. Instead of managing separate API keys, authentication systems, and billing relationships with multiple AI providers, developers can integrate once and access the entire ecosystem of LLMs.

The platform offers one-click onboarding with no vendor lock-in, allowing teams to switch between models based on performance, cost, or capability requirements without rewriting integration code. Usage-based billing provides transparent pricing and cost control, eliminating the complexity of navigating different pricing structures across providers.
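To illustrate what switching models without rewriting integration code can look like, here is a sketch that builds (but does not send) a chat request using only the Python standard library. The endpoint URL, the model identifiers, the bearer-token header, and the chat-completions payload shape are all assumptions for illustration; consult the AnyAPI.ai documentation for the actual values.

```python
import json
import urllib.request

# Placeholders, NOT documented AnyAPI.ai values.
API_URL = "https://api.example.com/v1/chat/completions"
MODEL_ID = "mixtral-8x22b-instruct"

def build_chat_request(api_key, user_message, model=MODEL_ID):
    """Build a POST request carrying a single-turn chat payload (assumed format)."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": user_message}],
    }
    return urllib.request.Request(
        API_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )

prompt = "Summarize this ticket in one sentence."
request = build_chat_request("YOUR_API_KEY", prompt)

# Switching models changes one argument; the rest of the integration is untouched.
alternative = build_chat_request("YOUR_API_KEY", prompt, model="another-model-id")

# To send, once real values are filled in: urllib.request.urlopen(request)
```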

AnyAPI.ai delivers production-grade infrastructure including automatic failover, load balancing, and request routing that ensures high availability for mission-critical applications. Developer tools including detailed analytics, usage dashboards, and debugging capabilities provide visibility into model performance and cost optimization opportunities.

Compared with other aggregation platforms, AnyAPI.ai emphasizes dedicated capacity options for enterprise customers, unified access patterns that reduce integration complexity, responsive technical support, and granular analytics that help teams understand model behavior and optimize prompt strategies.

Start Using Mixtral 8x22B Instruct via API Today

Mixtral 8x22B Instruct brings powerful AI within reach of developers and enterprises. Its combination of flagship-level performance, cost efficiency through sparse activation, and a 65,536-token context window makes it a strong choice for teams building sophisticated AI applications at scale.

Whether you are a startup looking to differentiate with advanced AI features, a development team seeking reliable code generation capabilities, or an enterprise automating complex workflows, Mixtral 8x22B Instruct delivers the intelligence and flexibility required for production success.

Integrate Mixtral 8x22B Instruct via AnyAPI.ai and start building today. Sign up, get your API key, and launch in minutes with access to one of the most powerful open-weight models available. Join thousands of developers who trust AnyAPI.ai for scalable, reliable LLM API access.